20 Mar

Web MCP – The Future of AI Integration with Web Applications

The rapid adoption of large language models across enterprise software has exposed a structural problem. Each application that seeks to leverage AI capabilities must build its own integration layer – a custom bridge between a model and the application’s data, tools, and workflows. The result is a fragmented landscape of incompatible connectors, inconsistent security boundaries, and mounting maintenance overhead.

Model Context Protocol (MCP) was designed precisely to address this problem. It provides a vendor-neutral, open standard that defines how AI models communicate with external tools and data sources. Rather than negotiating a new integration contract for every service, developers implement a single protocol once and gain interoperability across the entire ecosystem.

For organizations running human capital management (HCM) systems – where data flows across recruitment, performance, payroll, scheduling, and compliance – MCP offers a coherent architecture for bringing AI into day-to-day operations without rebuilding existing infrastructure.

What is Model Context Protocol?

MCP is an open specification published by Anthropic in late 2024 that standardizes the interface between an AI model and its external environment. It defines how a model can discover available tools, request data from external sources, and receive the results of actions – all within a structured, inspectable exchange.

The most commonly cited analogy in technical documentation is that of a universal connector. Where USB-C allows a single cable to carry power, data, and video across devices from different manufacturers, MCP allows a single integration pattern to connect an AI model to databases, APIs, file systems, and business applications regardless of vendor.

MCP is not specific to any one model or provider. It is designed as a foundation for the broader ecosystem of AI-powered tools, making integration portable and reusable across different model versions or even different AI providers.

How Web MCP works

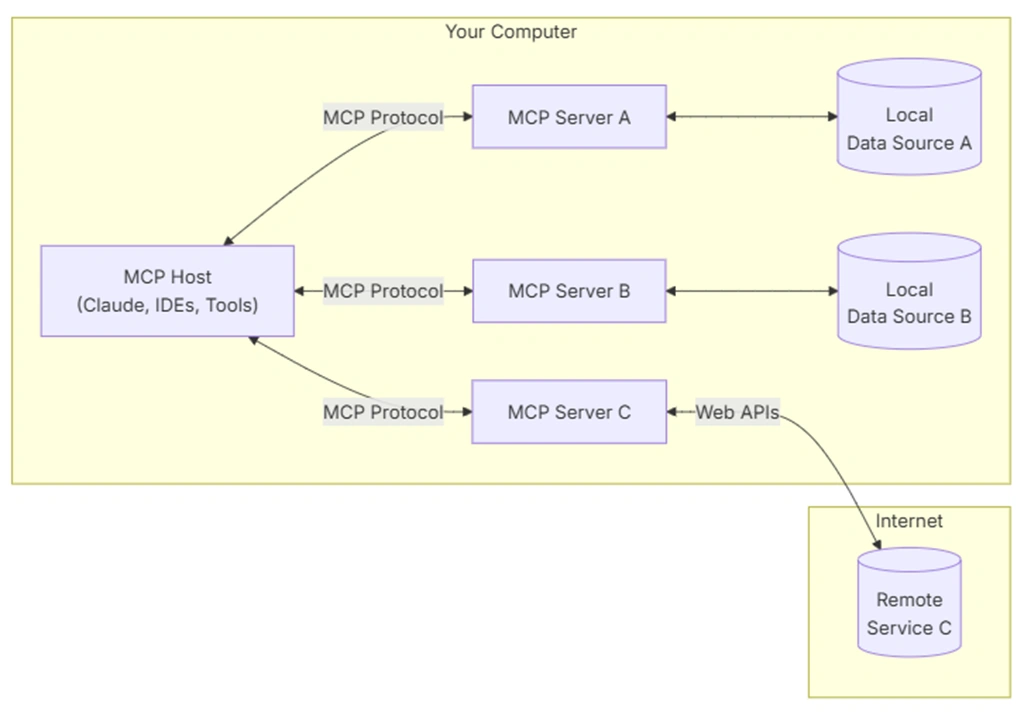

The protocol defines three primary roles: the Host, the Client, and the Server.

The Host is the environment in which the AI model runs – typically a desktop application, a web interface, or an autonomous agent runtime. The Host manages user sessions and is responsible for enforcing access policies.

The MCP Client is a component embedded within the Host. It handles the protocol-level communication: discovering what a connected server offers, negotiating capabilities, and routing requests. Each Client maintains a one-to-one connection with a single server, so a Host that needs several servers runs multiple MCP Clients simultaneously.

The MCP Server is an independently deployed process that exposes capabilities to the model. A server can represent a database, a business application, a file system, or any other external system. It is through servers that the model gains access to real-world context and the ability to act on it.

The three pillars of the protocol

MCP organizes the capabilities exposed by a server into three categories:

- Tools – executable functions that the model can invoke to perform actions, such as querying a database, submitting a form, or sending a notification

- Resources – read-only data sources that provide the model with context, such as employee records, document contents, or calendar entries

- Prompts – reusable instruction templates that guide the model’s behavior for specific tasks, defined by the server and surfaced to the user
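What a server advertises for each of these primitives can be sketched as the descriptors it would return from `tools/list`, `resources/list`, and `prompts/list`. The field names (`name`, `description`, `inputSchema`, `uri`, `mimeType`, `arguments`) follow the MCP specification; every tool name, URI, and prompt name below is a hypothetical HCM example.

```python
import json

# Hypothetical HCM capabilities, one per primitive. Field names follow the MCP
# specification; names, URIs, and descriptions are illustrative only.
TOOL = {
    "name": "schedule_interview",
    "description": "Book an interview slot for a candidate.",
    "inputSchema": {  # JSON Schema for the arguments the model must supply
        "type": "object",
        "properties": {
            "candidate_id": {"type": "string"},
            "start": {"type": "string", "format": "date-time"},
        },
        "required": ["candidate_id", "start"],
    },
}

RESOURCE = {
    "uri": "hcm://policies/leave",  # hypothetical URI scheme
    "name": "Leave policy",
    "mimeType": "text/markdown",
}

PROMPT = {
    "name": "summarize_review",
    "description": "Summarize a performance review for a manager.",
    "arguments": [{"name": "review_id", "required": True}],
}


def list_result(kind: str) -> dict:
    """Return the result payload for a tools/list, resources/list, or prompts/list request."""
    catalog = {"tools": [TOOL], "resources": [RESOURCE], "prompts": [PROMPT]}
    return {kind: catalog[kind]}


print(json.dumps(list_result("tools"), indent=2))
```

The split matters for control: tools are invoked by the model through `tools/call`, resources are fetched by URI through `resources/read`, and prompts are retrieved through `prompts/get` when a user selects them.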

Transport layer

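In web environments, MCP communication typically runs over HTTP. The original HTTP transport uses Server-Sent Events (SSE) for the server-to-client direction, combined with standard HTTP POST requests for client-to-server messages; later revisions of the specification consolidate this into a single "Streamable HTTP" endpoint that accepts POST requests and can still stream responses over SSE. In both cases, the design allows for streaming responses while remaining compatible with standard web infrastructure, including load balancers, proxies, and cloud hosting environments.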
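On the client side, the SSE direction comes down to reading an event stream. Below is a minimal SSE parser sketch, assuming the stream body is already available as text; in the 2024-11-05 HTTP+SSE transport, the server's first event is named `endpoint` and carries the URL to which the client POSTs its messages. The session id in the sample is made up, and a production client would parse the stream incrementally from an HTTP library rather than from a complete string.

```python
import json


def parse_sse(stream: str) -> list[tuple[str, str]]:
    """Split a Server-Sent Events body into (event_type, data) pairs.

    Minimal parser: handles 'event:' and 'data:' fields, skips comment lines
    (starting with ':'), and treats a blank line as the end of an event.
    """
    events = []
    event_type, data_lines = "message", []
    for line in stream.splitlines():
        if line == "":
            if data_lines:
                events.append((event_type, "\n".join(data_lines)))
            event_type, data_lines = "message", []
        elif line.startswith(":"):
            continue  # comment or keep-alive line
        else:
            field, _, value = line.partition(":")
            value = value[1:] if value.startswith(" ") else value  # drop one leading space
            if field == "event":
                event_type = value
            elif field == "data":
                data_lines.append(value)
    if data_lines:  # flush a final event that lacks a trailing blank line
        events.append((event_type, "\n".join(data_lines)))
    return events


# Hypothetical stream: the endpoint announcement, a keep-alive comment, then a JSON-RPC response.
body = (
    "event: endpoint\n"
    "data: /messages?session_id=abc123\n"
    "\n"
    ": keep-alive\n"
    "event: message\n"
    'data: {"jsonrpc": "2.0", "id": 1, "result": {"tools": []}}\n'
    "\n"
)
events = parse_sse(body)
for kind, data in events:
    print(kind, json.loads(data) if kind == "message" else data)
```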

Local integrations – such as connections to desktop applications or command-line tools – use standard input/output (stdio) streams instead, with the Host launching the server as a local subprocess. This keeps the protocol lightweight in contexts where HTTP overhead is unnecessary.
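Over stdio, MCP frames each JSON-RPC message as one line of JSON, so a message must never contain a raw newline. The sketch below shows that framing with an in-memory buffer standing in for the subprocess pipes; the `send_notification` tool name is hypothetical.

```python
import io
import json


def write_message(stream: io.TextIOBase, message: dict) -> None:
    """Write one JSON-RPC message as a single newline-terminated line."""
    # json.dumps without indent emits no raw newlines (newlines inside strings are
    # escaped as \n), so each message occupies exactly one line.
    stream.write(json.dumps(message) + "\n")
    stream.flush()


def read_messages(stream: io.TextIOBase):
    """Yield JSON-RPC messages from a newline-delimited stream until EOF."""
    for line in stream:
        line = line.strip()
        if line:
            yield json.loads(line)


# In a real deployment these would be the server subprocess's stdin and stdout.
pipe = io.StringIO()
write_message(pipe, {"jsonrpc": "2.0", "id": 1, "method": "tools/list"})
write_message(pipe, {
    "jsonrpc": "2.0", "id": 2, "method": "tools/call",
    "params": {"name": "send_notification",  # hypothetical tool
               "arguments": {"body": "Line one\nLine two"}},
})
pipe.seek(0)
received = list(read_messages(pipe))
print(received)
```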

MCP vs. traditional REST API integration

The question of when to use MCP rather than conventional REST API integration is one of scope and architecture. REST APIs remain well-suited for discrete, well-defined operations between two systems with a stable interface contract. MCP is designed for a different scenario: one where an AI agent must dynamically discover what is available, reason about which capabilities to use, and chain multiple operations in sequence.

| Aspect | REST API | Model Context Protocol |
| --- | --- | --- |
| Integration model | Point-to-point, custom per service | Standardized, universal connector |
| Configuration effort | High – each API requires separate setup | Low – single protocol, reusable adapters |
| Context awareness | Stateless by default | Context and session state built in |
| Scalability | Integration effort grows linearly with the number of services | Scales uniformly across all connected tools |
| AI agent support | Requires custom orchestration layer | Native – agents discover and call tools autonomously |
| Security model | Defined per integration | Defined per server; the Host authenticates the user and controls which servers a session can reach |

The key distinction lies in the consumer. A REST API is designed to be called by deterministic code with a predetermined call graph. MCP is designed to be called by a model that will itself decide which tools to invoke, in which order, based on a natural language objective. This requires the protocol to carry richer metadata about capabilities, expected inputs, and potential side effects.
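The richer metadata can be illustrated with one tool descriptor: `inputSchema` is a JSON Schema telling the model which arguments to supply, and `annotations` (hints such as `readOnlyHint` and `destructiveHint`, added in a 2025 revision of the specification) describe side effects. The `submit_review` tool is hypothetical, and `check_arguments` is a deliberately minimal validator written for this sketch, not a substitute for a full JSON Schema library.

```python
# Hypothetical tool: inputSchema describes expected inputs, annotations describe side effects.
SUBMIT_REVIEW = {
    "name": "submit_review",
    "description": "Submit a completed performance review.",
    "inputSchema": {
        "type": "object",
        "properties": {
            "review_id": {"type": "string"},
            "rating": {"type": "integer", "minimum": 1, "maximum": 5},
        },
        "required": ["review_id", "rating"],
    },
    "annotations": {"readOnlyHint": False, "destructiveHint": False, "idempotentHint": True},
}

_JSON_TYPES = {"string": str, "integer": int, "object": dict, "boolean": bool}


def check_arguments(tool: dict, args: dict) -> list[str]:
    """Return a list of problems with `args`; an empty list means they pass this check.

    Checks only 'required', primitive 'type', and integer bounds. Unknown keys are
    rejected, which is stricter than JSON Schema's default of allowing extra properties.
    """
    schema = tool["inputSchema"]
    problems = [f"missing '{k}'" for k in schema.get("required", []) if k not in args]
    for key, value in args.items():
        spec = schema["properties"].get(key)
        if spec is None:
            problems.append(f"unexpected '{key}'")
            continue
        expected = _JSON_TYPES[spec["type"]]
        # bool is a subclass of int in Python, so exclude it explicitly for integers.
        if not isinstance(value, expected) or (expected is int and isinstance(value, bool)):
            problems.append(f"'{key}' should be {spec['type']}")
        elif expected is int and not spec.get("minimum", value) <= value <= spec.get("maximum", value):
            problems.append(f"'{key}' out of range")
    return problems


print(check_arguments(SUBMIT_REVIEW, {"rating": 9}))
```

Validating before execution lets the server return a precise error the model can act on, instead of failing deep inside the business logic.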

Applications of Web MCP in HCM Systems

HCM systems are, by nature, data-rich environments. They hold structured records across the entire employee lifecycle – from the first touchpoint in recruitment through to offboarding and alumni relations. This density of context makes them particularly well-suited to AI augmentation via MCP.

Recruitment process automation

An MCP server exposing the recruitment module allows an AI assistant to search candidate profiles, compare qualifications against a job description, schedule interviews, and draft communications – all in response to natural language instructions from a recruiter. The model does not need hard-coded knowledge of the system’s data model; the server exposes its capabilities through the protocol, and the model discovers them at runtime.
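Runtime discovery can be sketched with a tiny in-process server that answers `tools/list` and `tools/call`. Everything recruitment-specific here (the `search_candidates` tool, the candidate records) is hypothetical; the request and result shapes follow the MCP specification, and the error codes are the standard JSON-RPC ones.

```python
import json

# Hypothetical recruitment data; a real server would query the HCM database.
CANDIDATES = [
    {"id": "C-1", "name": "Candidate One", "skills": ["python", "sql"]},
    {"id": "C-2", "name": "Candidate Two", "skills": ["java"]},
]


def search_candidates(skill: str) -> list[dict]:
    """Hypothetical tool handler: candidates who list the given skill."""
    return [c for c in CANDIDATES if skill.lower() in c["skills"]]


# Tool name -> (handler, descriptor advertised through tools/list).
REGISTRY = {
    "search_candidates": (
        search_candidates,
        {
            "name": "search_candidates",
            "description": "Find candidates who list a given skill.",
            "inputSchema": {
                "type": "object",
                "properties": {"skill": {"type": "string"}},
                "required": ["skill"],
            },
        },
    ),
}


def _error(req_id: int, code: int, message: str) -> dict:
    return {"jsonrpc": "2.0", "id": req_id, "error": {"code": code, "message": message}}


def handle(request: dict) -> dict:
    """Answer a JSON-RPC request for tools/list or tools/call."""
    method, params = request["method"], request.get("params", {})
    if method == "tools/list":
        result = {"tools": [descriptor for _, descriptor in REGISTRY.values()]}
    elif method == "tools/call":
        name = params.get("name")
        if name not in REGISTRY:
            return _error(request["id"], -32602, f"Unknown tool: {name}")  # "Invalid params"
        handler, _ = REGISTRY[name]
        data = handler(**params.get("arguments", {}))
        result = {"content": [{"type": "text", "text": json.dumps(data)}], "isError": False}
    else:
        return _error(request["id"], -32601, f"Method not found: {method}")
    return {"jsonrpc": "2.0", "id": request["id"], "result": result}


# The caller learns the tool name from the listing instead of hard-coding it.
listing = handle({"jsonrpc": "2.0", "id": 1, "method": "tools/list"})
tool_name = listing["result"]["tools"][0]["name"]
reply = handle({"jsonrpc": "2.0", "id": 2, "method": "tools/call",
                "params": {"name": tool_name, "arguments": {"skill": "Python"}}})
print(reply["result"]["content"][0]["text"])
```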

Employee data retrieval

Queries such as “Show me all employees on fixed-term contracts expiring before the end of Q3” can be handled by a model with access to an MCP server that exposes contract data. The model translates the natural language query into a structured tool call, receives the results, and presents them in a format appropriate to the user’s context – whether a summary table, a narrative report, or a prioritized list of actions.
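The translation step can be sketched as the `tools/call` request a model might produce for that question, plus a server-side handler that answers it. The `search_contracts` tool, its argument names, and the contract records are hypothetical, and Q3 is assumed to be the calendar quarter ending 30 September; an organization on a different fiscal year would compute the cutoff differently.

```python
from datetime import date

# Hypothetical contract records as an HCM server might hold them.
CONTRACTS = [
    {"employee": "E-101", "type": "fixed-term", "end_date": date(2025, 8, 31)},
    {"employee": "E-102", "type": "fixed-term", "end_date": date(2025, 12, 31)},
    {"employee": "E-103", "type": "permanent", "end_date": None},
]


def end_of_q3(year: int) -> date:
    """Last day of the third calendar quarter."""
    return date(year, 9, 30)


def build_call(year: int) -> dict:
    """Structured request a model might derive from the natural language question."""
    return {
        "jsonrpc": "2.0",
        "id": 7,
        "method": "tools/call",
        "params": {
            "name": "search_contracts",  # hypothetical tool name
            "arguments": {"contract_type": "fixed-term", "ends_before": end_of_q3(year).isoformat()},
        },
    }


def search_contracts(contract_type: str, ends_before: str) -> list[dict]:
    """Server-side handler: contracts of the given type ending on or before the cutoff."""
    cutoff = date.fromisoformat(ends_before)
    return [
        c for c in CONTRACTS
        if c["type"] == contract_type and c["end_date"] is not None and c["end_date"] <= cutoff
    ]


request = build_call(2025)
matches = search_contracts(**request["params"]["arguments"])
print([m["employee"] for m in matches])  # ['E-101']
```

Dates travel as ISO 8601 strings because JSON has no date type; the server parses them back before filtering.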

AI assistant across core modules

With MCP servers mapped to individual modules – leave management, performance reviews, onboarding, payroll – a single AI assistant can operate across the entire system without requiring a monolithic integration. Each server manages its own scope, and the model composes capabilities from multiple servers to fulfill complex requests.

A manager asking “What is the leave balance for my team this week, and do any performance reviews need to be submitted before Friday?” is, in protocol terms, issuing a request that the model will resolve by calling tools on two separate MCP servers and synthesizing the results.
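That resolution can be sketched as a routing table on the Host side: each tool name maps to the server that advertised it, and the answers are combined afterwards. Both servers are reduced to hypothetical in-process handlers returning fixed sample values; in a real Host each would be a separate MCP connection owned by its own Client.

```python
from typing import Any, Callable

Handler = Callable[..., Any]

# Two hypothetical servers, each reduced to a map of tool name -> handler.
LEAVE_SERVER: dict[str, Handler] = {
    "get_team_leave_balance": lambda team: {"Ann": 12, "Ben": 3},  # days remaining
}
PERFORMANCE_SERVER: dict[str, Handler] = {
    "list_pending_reviews": lambda team, due_before: ["Ben"],
}
SERVERS = {"leave": LEAVE_SERVER, "performance": PERFORMANCE_SERVER}


def build_routing_table(servers: dict[str, dict[str, Handler]]) -> dict[str, str]:
    """Map each advertised tool name to the server that provides it."""
    table: dict[str, str] = {}
    for server_name, tools in servers.items():
        for tool_name in tools:
            if tool_name in table:
                # Two servers exposing the same name would make routing ambiguous.
                raise ValueError(f"tool name collision: {tool_name}")
            table[tool_name] = server_name
    return table


def call_tool(table: dict[str, str], servers: dict[str, dict[str, Handler]],
              name: str, **arguments: Any) -> Any:
    """Route one tool call to the server that owns the tool."""
    return servers[table[name]][name](**arguments)


routes = build_routing_table(SERVERS)
balances = call_tool(routes, SERVERS, "get_team_leave_balance", team="T-1")
pending = call_tool(routes, SERVERS, "list_pending_reviews", team="T-1", due_before="2025-06-13")
# Synthesis step: combine both answers into a single response for the manager.
summary = {"leave_balance_days": balances, "reviews_due": pending}
print(summary)
```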

Reporting and analytics

MCP enables a shift from static, pre-built reports to conversational analytics. Rather than navigating a reporting interface and selecting parameters, a user can describe what they need, and the model will determine which data resources to access, apply the appropriate aggregations, and return a structured result. This is particularly valuable for ad-hoc queries that do not fit existing report templates.

Security and access control

A common concern with any protocol that gives an AI model access to production systems is the question of authorization: what can the model do, on whose behalf, and with what level of oversight?

MCP addresses this at the protocol level. Each server defines a permission boundary. The Host is responsible for authenticating the user and determining which servers the user is allowed to connect to. Servers, in turn, can expose only the subset of their capabilities that the current session is authorized to use.

The principle of least privilege applies directly. A model assisting a recruiter has access to recruitment data through the relevant server, but not to payroll records or administrative settings. The boundary is enforced by the server, not by prompting conventions.
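Server-side enforcement of that boundary can be sketched as scope checks applied twice: when the server answers `tools/list`, and again on every `tools/call`, so a model that guesses a hidden tool name still cannot invoke it. The scope strings, tool names, and `Session` type are hypothetical; how scopes are granted (for example, from the Host's user authentication) is outside this sketch.

```python
from dataclasses import dataclass, field

# Hypothetical mapping of each tool to the permission scope it requires.
TOOL_SCOPES = {
    "search_candidates": "recruitment:read",
    "schedule_interview": "recruitment:write",
    "get_salary": "payroll:read",
}


@dataclass
class Session:
    user: str
    scopes: set[str] = field(default_factory=set)


def visible_tools(session: Session) -> list[str]:
    """tools/list for this session: only tools whose scope has been granted."""
    return sorted(name for name, scope in TOOL_SCOPES.items() if scope in session.scopes)


def authorize_call(session: Session, tool_name: str) -> None:
    """Run on every tools/call, regardless of what the model was shown."""
    required = TOOL_SCOPES.get(tool_name)
    if required is None or required not in session.scopes:
        raise PermissionError(f"{session.user} may not call {tool_name}")


recruiter = Session("recruiter-01", {"recruitment:read", "recruitment:write"})
print(visible_tools(recruiter))  # ['schedule_interview', 'search_candidates']
```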

Because all tool calls and resource accesses are discrete, inspectable requests, MCP-based integration also supports comprehensive audit logging. Every action the model takes is traceable to a specific request, a specific user session, and a specific timestamp – a property that is difficult to achieve with less structured integration patterns.
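Because each call is a discrete request, audit logging can be sketched as a wrapper around tool execution that records the user, session, tool, arguments, UTC timestamp, and outcome. The in-memory list stands in for an append-only store, and the user, session, and tool names are hypothetical.

```python
import json
from datetime import datetime, timezone
from typing import Any, Callable

AUDIT_LOG: list[dict[str, Any]] = []  # stand-in for an append-only audit store


def audited_call(user: str, session_id: str, tool_name: str,
                 handler: Callable[..., Any], arguments: dict[str, Any]) -> Any:
    """Invoke a tool handler and record who called what, when, and with what outcome."""
    entry = {
        "timestamp": datetime.now(timezone.utc).isoformat(),
        "user": user,
        "session_id": session_id,
        "tool": tool_name,
        "arguments": arguments,
    }
    try:
        result = handler(**arguments)
        entry["outcome"] = "ok"
        return result
    except Exception as exc:
        entry["outcome"] = f"error: {exc}"
        raise
    finally:
        AUDIT_LOG.append(entry)  # recorded whether the call succeeded or failed


audited_call("manager-07", "sess-42", "get_leave_balance",
             lambda employee_id: 12, {"employee_id": "E-101"})
print(json.dumps(AUDIT_LOG[-1], indent=2))
```

Logging in `finally` means failed and rejected calls leave a trace too, which is often exactly what a reviewer needs.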

MCP and the future of HCM systems

The significance of MCP extends beyond its immediate utility as an integration standard. It is a structural enabler for a class of AI applications that have not previously been practical to build: autonomous agents that operate across multiple systems, maintain context across sessions, and take sequences of actions in pursuit of a defined goal.

In the context of HCM, this points toward systems where routine administrative work – contract renewals, onboarding task coordination, policy compliance checks – is handled by agents operating within defined authorization boundaries, escalating to human review only where judgment or exception handling is required.
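An escalation boundary of this kind can be sketched as a gate between the agent's plan and execution. The policy here is a hypothetical example: actions the server marks as read-only (via the `readOnlyHint` tool annotation) and tools on an explicit allow-list run automatically, and everything else waits for human review. Annotations are hints supplied by the server, so a gate like this should only rely on them for servers the organization controls. All tool names are made up.

```python
from dataclasses import dataclass


@dataclass
class PlannedAction:
    tool: str
    arguments: dict
    annotations: dict  # as advertised by the server in tools/list


def route_action(action: PlannedAction, auto_approved_tools: set[str]) -> str:
    """Return 'execute' or 'escalate' under a hypothetical approval policy.

    Read-only actions and allow-listed routine tools run automatically; any other
    action that may change state is held for human review.
    """
    if action.annotations.get("readOnlyHint", False) or action.tool in auto_approved_tools:
        return "execute"
    return "escalate"


allow_list = {"create_onboarding_task"}  # hypothetical routine, low-risk tool
plan = [
    PlannedAction("list_expiring_contracts", {"before": "2025-09-30"}, {"readOnlyHint": True}),
    PlannedAction("create_onboarding_task", {"employee_id": "E-204"}, {"readOnlyHint": False}),
    PlannedAction("change_contract_terms", {"employee_id": "E-101"},
                  {"readOnlyHint": False, "destructiveHint": True}),
]
decisions = {action.tool: route_action(action, allow_list) for action in plan}
print(decisions)
```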

The standardization that MCP provides is a prerequisite for this architecture. Without a common protocol, each autonomous workflow requires bespoke integration work. With it, the focus shifts to defining the boundaries within which agents are permitted to act and to the design of the workflows themselves.

MintHCM’s development direction reflects this trajectory. As MCP adoption widens across the broader software ecosystem, the capacity to expose HCM capabilities through the protocol – and to consume capabilities from adjacent systems such as communication platforms, document management tools, and external data providers – becomes a meaningful architectural differentiator.

Summary

MCP resolves a foundational challenge in enterprise AI adoption: how to connect models reliably to the systems where work actually happens, without rebuilding those systems or accepting a proliferation of custom integrations.

Its architecture – a clear separation between hosts, clients, and servers, with well-defined primitives for tools, resources, and prompts – provides a stable foundation for building AI capabilities that are secure, auditable, and composable.

For HCM systems, the practical implications are substantial. MCP makes it possible to bring conversational AI into recruitment, workforce planning, performance management, and reporting without requiring deep modifications to existing infrastructure. The integration boundary is well-defined, the permission model is explicit, and the behavior of the model is, at each step, traceable and inspectable.

As both AI capabilities and enterprise expectations continue to develop, protocols that enable structured, governed access to business systems will become central to how organizations deploy AI responsibly and at scale.